OVERVIEW

Our experience makes us a global leader in Internet-based data production.

Snowcap has developed cost-effective, advanced technology to extract and structure any Internet data. For ten years, we have helped businesses gain an advantage by making intelligent choices based on real-time information.

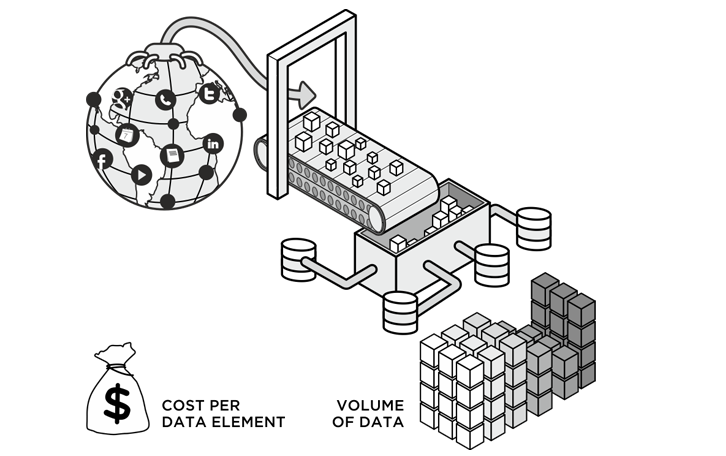

We deploy our proprietary web crawlers and state-of-the-art extraction programs to mine data from most public sources on the Internet, whether once or repeatedly (“n” times), at ever-expanding scale. Our web crawlers, data mining technologies, parsing modules, aggregation and other production systems are the best in the industry, and they continue to improve as technology matures, handling new sources across various industry verticals.

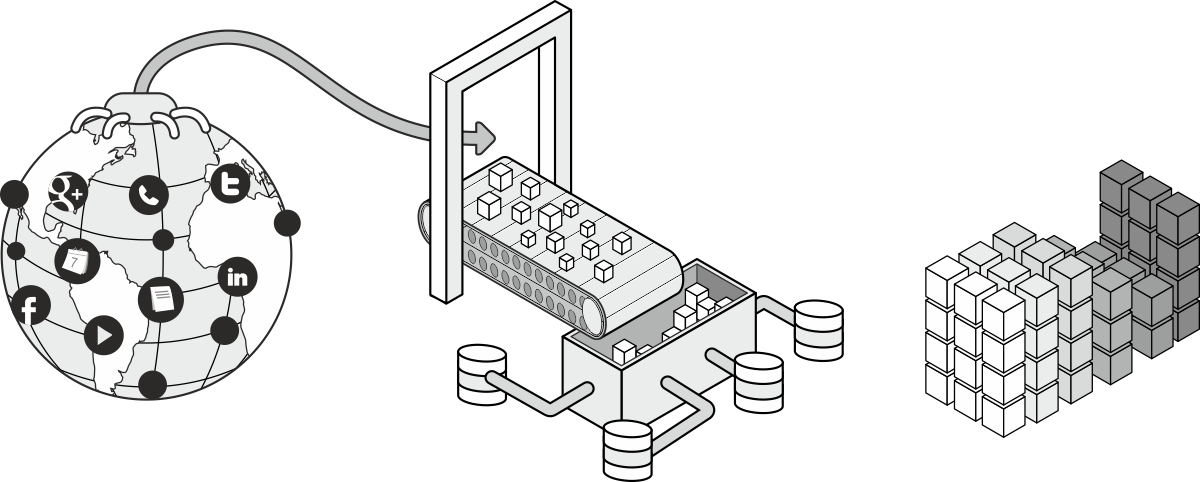

Production Process

Search simplified for all your business applications and intelligence needs.

COLLECTION

Our web crawlers use sophisticated algorithms to mine webpages from the surface and deep web. A crawler visits a URL, identifies all the hyperlinks on the page and adds them to the list of URLs to visit. It then visits those pages and repeats the process recursively, following each new link it finds. In this way, our web crawlers gather data from a wide range of Internet sources.
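The collection loop described above (visit a URL, extract its hyperlinks, queue the new ones, repeat) can be sketched as a breadth-first crawl. This is a minimal illustration, not Snowcap's proprietary crawler: the `fetch` callable, the `max_pages` limit and the other names are hypothetical, and the "recursive" following of links is done iteratively with a queue. A real crawler would also need politeness rules (such as robots.txt and rate limits), retries and deep-web access, none of which are shown.

```python
from collections import deque
from html.parser import HTMLParser
from typing import Callable, Optional
from urllib.parse import urldefrag, urljoin


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self) -> None:
        super().__init__()
        self.links: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(
    seed: str,
    fetch: Callable[[str], Optional[str]],  # hypothetical: returns HTML or None
    max_pages: int = 100,
) -> dict[str, str]:
    """Breadth-first crawl from `seed`; returns a map of URL -> HTML."""
    frontier = deque([seed])  # URLs waiting to be visited
    seen = {seed}             # URLs already queued, so none is visited twice
    pages: dict[str, str] = {}

    while frontier and len(pages) < max_pages:
        url = frontier.popleft()
        html = fetch(url)
        if html is None:      # unreachable page: skip it
            continue
        pages[url] = html

        parser = LinkExtractor()
        parser.feed(html)
        for href in parser.links:
            # Resolve relative links and drop #fragments so duplicates collapse.
            absolute, _fragment = urldefrag(urljoin(url, href))
            if absolute not in seen:
                seen.add(absolute)
                frontier.append(absolute)
    return pages


if __name__ == "__main__":
    # An in-memory "site" stands in for the network.
    site = {
        "https://example.com/": '<a href="/a">A</a> <a href="/b">B</a>',
        "https://example.com/a": '<a href="/b#top">B again</a>',
        "https://example.com/b": '<a href="https://example.com/">home</a>',
    }
    print(sorted(crawl("https://example.com/", site.get)))
```

The `seen` set is what keeps the crawl from looping forever on pages that link back to each other.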

EXTRACTION

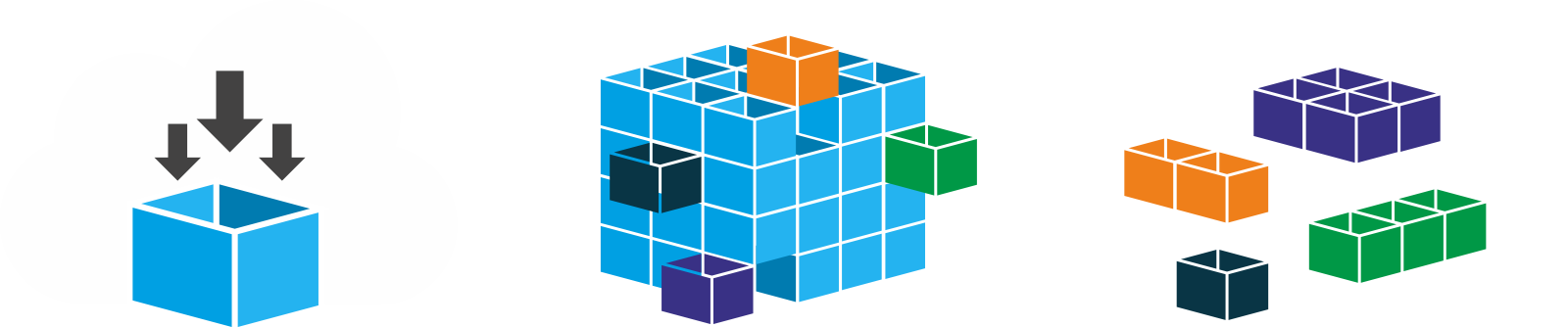

We extract data with sophisticated parsing modules that give structure and context to the unstructured data delivered by our web crawlers. This lets us readily determine scale and efficiencies. Our “off-the-shelf” parsing modules can also be customized.
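One way to picture reusable parsing modules is as small functions that each turn raw HTML into a few structured fields, selected from a registry and merged into one record. The sketch below assumes that design; it is not Snowcap's parsing technology, and the module names (`title_meta`, `price`), the registry `PARSING_MODULES` and the dollar-price pattern are hypothetical examples.

```python
import re
from html.parser import HTMLParser


class TitleMetaParser(HTMLParser):
    """Collects the <title> text and <meta name="..." content="..."> pairs."""

    def __init__(self) -> None:
        super().__init__()
        self.record: dict[str, str] = {}
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and attributes.get("name") and attributes.get("content"):
            self.record[attributes["name"].lower()] = attributes["content"].strip()

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        # Title text may arrive in several chunks, so concatenate.
        if self._in_title:
            self.record["title"] = self.record.get("title", "") + data


def title_meta_module(html: str) -> dict[str, str]:
    """Hypothetical module: page title plus named meta tags."""
    parser = TitleMetaParser()
    parser.feed(html)
    parser.close()
    if "title" in parser.record:
        parser.record["title"] = parser.record["title"].strip()
    return parser.record


PRICE_PATTERN = re.compile(r"\$\s?(\d+(?:\.\d{2})?)")


def price_module(html: str) -> dict[str, str]:
    """Hypothetical module: first US-dollar amount found in the page."""
    match = PRICE_PATTERN.search(html)
    return {"price": match.group(1)} if match else {}


# Registry of reusable modules; each job picks only the ones it needs.
PARSING_MODULES = {"title_meta": title_meta_module, "price": price_module}


def extract(html: str, module_names: list[str]) -> dict[str, str]:
    """Run the chosen modules and merge their fields into one record."""
    record: dict[str, str] = {}
    for name in module_names:
        record.update(PARSING_MODULES[name](html))
    return record
```

Customizing for a new source then means adding one function to the registry rather than changing the pipeline.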

CLASSIFICATION & DELIVERY

After the data is collected and extracted, the final production stage (aggregation, filtering, cleansing, matching and interpretation) turns it into consumable data. We then deliver the data in the format each client requires.
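A simplified version of this final stage is sketched below under stated assumptions: records are plain dictionaries, "cleansing" means collapsing whitespace and dropping empty values, "filtering" drops records missing required fields, "matching" treats records with the same case-insensitive `name` as the same entity, and "aggregation" merges their fields. The field names, the `required` and `match_key` parameters and the JSON/CSV choice are hypothetical; the interpretation step is not modeled.

```python
import csv
import io
import json


def normalize(record: dict) -> dict:
    """Cleansing: collapse whitespace and drop empty or missing values."""
    cleaned = {}
    for field, value in record.items():
        if value is None:
            continue
        text = " ".join(str(value).split())
        if text:
            cleaned[field] = text
    return cleaned


def prepare(records, required=("name",), match_key="name"):
    """Filter, match and aggregate raw records into one record per entity."""
    merged: dict[str, dict] = {}
    for raw in records:
        rec = normalize(raw)
        if not all(rec.get(field) for field in required):
            continue  # filtering: a required field is missing
        key = rec.get(match_key, "").casefold()  # matching key
        if key in merged:
            # Aggregation: keep earlier values, fill in fields they lacked.
            for field, value in rec.items():
                merged[key].setdefault(field, value)
        else:
            merged[key] = rec
    return list(merged.values())


def deliver(records, fmt="json") -> str:
    """Serialize the prepared records in the requested format."""
    if fmt == "json":
        return json.dumps(records, sort_keys=True)
    if fmt == "csv":
        fields = sorted({field for rec in records for field in rec})
        buffer = io.StringIO()
        writer = csv.DictWriter(buffer, fieldnames=fields)
        writer.writeheader()
        writer.writerows(records)  # missing fields are written as blanks
        return buffer.getvalue()
    raise ValueError(f"unsupported format: {fmt}")
```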

VALUE PROPOSITION

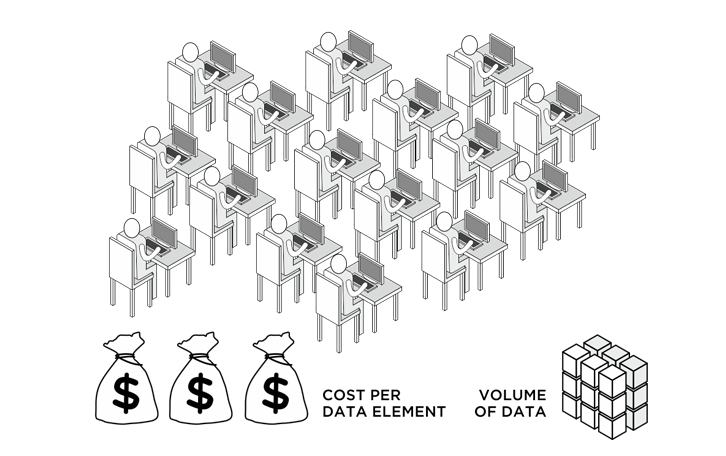

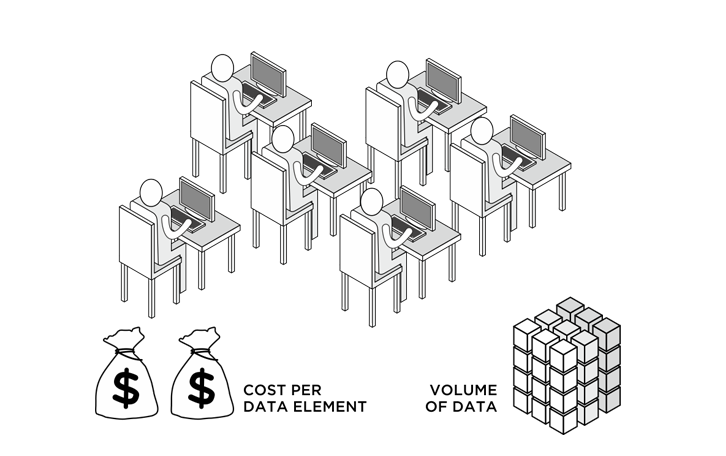

Our technology delivers cost-effective results by eliminating manual index searches on popular search engines. Our crawlers and parsers work as real-time information units, extracting information from millions of records at high speed from any available source on the Internet.

Comparison chart: Manual Method, Script Extraction, Snowcap Technology

Our Web Crawlers

- Are trained to prioritize large volumes of web pages to find the best sources for crawling

- Can extract data from deep web sources, including dynamic, limited-access, scripted, non-HTML and text content residing in domain-specific databases and the private or contextual web

- Can process multimedia content (images, video and audio), which is now an integral part of websites

- Are designed to recognize changes in web pages and report any information that has been added or removed since the initial crawl
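Change detection between crawls, the last capability listed above, can be sketched with a content fingerprint for a quick "did anything change?" check and a line diff to report what was added or removed. This is an illustrative sketch, not Snowcap's method: it assumes both snapshots are extracted page text (diffing raw HTML would also flag markup changes), and the function names are hypothetical.

```python
import difflib
import hashlib


def fingerprint(text: str) -> str:
    """SHA-256 of the page text; storing this lets a later crawl skip unchanged pages."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def diff_snapshots(old: str, new: str) -> dict[str, list[str]]:
    """Report lines added to or removed from a page between two crawls."""
    if fingerprint(old) == fingerprint(new):
        return {"added": [], "removed": []}  # fast path: page unchanged
    added, removed = [], []
    for line in difflib.ndiff(old.splitlines(), new.splitlines()):
        if line.startswith("+ "):
            added.append(line[2:])
        elif line.startswith("- "):
            removed.append(line[2:])
        # Lines starting with "  " are unchanged; "? " lines are diff hints.
    return {"added": added, "removed": removed}
```

A changed line shows up as one removal plus one addition, which is usually what a "what changed since last time" report needs.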

Quick To Implement

“Off-the-Shelf” Modules to Accelerate Data Production

The digital universe continues to expand, with the amount of data in it doubling every two years. Combined with the fact that 90% of all data on the web is semi-structured or unstructured, this makes quick and cost-effective data production a crucial factor in today’s business. Speed depends on technology that automates the harvesting of unstructured Internet data into a production environment to generate actionable data. Snowcap Data’s success originates from our own data production technology, which operates within a highly proprietary and flexible environment.

- Proprietary web crawling technology

- Ten years of experience parsing various media formats and data elements from web pages

- Multiple “off-the-shelf” modules for data parsing

- Proprietary scoring systems for data enrichment